How a Single Poisoned Python Package Nearly Compromised Every AI API Key on the Internet

The LiteLLM supply chain attack hit a package with 95M monthly downloads present in 36% of cloud environments — exposing how fragile the AI tooling trust chain really is.


On March 24, 2026, someone published two poisoned versions of LiteLLM to PyPI. No code appeared on GitHub. No release tag. No pull request. No review. Just a quiet upload to the Python Package Index — and every machine that installed it started bleeding credentials within seconds.

LiteLLM isn't some obscure utility. It's the package that routes API keys for OpenAI, Anthropic, Google, and Amazon through a single proxy. It pulls 95 million downloads a month and sits inside 36% of cloud environments (Wiz, 2026). NASA uses it. Netflix uses it. Stripe and NVIDIA use it. The attacker picked the one package whose entire job is holding every AI credential in the organization in one place.

Here's what happened, how it happened, and what your team needs to do about it right now.

TL;DR: A threat group called TeamPCP poisoned LiteLLM (95M monthly PyPI downloads) by first compromising the Trivy security scanner, then chaining stolen credentials across five package ecosystems in two weeks. The malware auto-executed on install, harvesting every API key, SSH key, and cloud token on the machine. The only reason thousands of companies didn't have their secrets fully exfiltrated is that the payload's sloppy code crashed the systems it ran on.

What Exactly Happened to LiteLLM?

Malicious versions 1.82.7 and 1.82.8 were live on PyPI for approximately three hours — from 8:30 UTC to 11:25 UTC on March 24 — before PyPI quarantined them (BleepingComputer, 2026). Three hours doesn't sound like much. But LiteLLM averages 3.4 million downloads per day. Even a fraction of that window means thousands of compromised installs.
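
To put a rough ceiling on that exposure, the arithmetic can be sketched as follows. The daily download figure and the window times come from the paragraph above; the flat download rate and the share of downloads that resolved to the poisoned versions are assumptions for illustration, not measured values.

```python
from datetime import datetime

# Figures quoted in the article. The uniform-rate model below is an assumption.
DAILY_DOWNLOADS = 3_400_000
WINDOW_START = datetime(2026, 3, 24, 8, 30)   # UTC
WINDOW_END = datetime(2026, 3, 24, 11, 25)    # UTC


def downloads_in_window(daily: int, start: datetime, end: datetime) -> float:
    """Estimate downloads between start and end, assuming a flat rate all day."""
    window_fraction = (end - start).total_seconds() / 86_400
    return daily * window_fraction


if __name__ == "__main__":
    total = downloads_in_window(DAILY_DOWNLOADS, WINDOW_START, WINDOW_END)
    print(f"All LiteLLM downloads in the window (any version): about {total:,.0f}")
    # Hypothetical shares of downloads that resolved to 1.82.7 or 1.82.8.
    for share in (0.01, 0.05, 0.25):
        print(f"  if {share:.0%} pulled a poisoned version: about {total * share:,.0f}")
```

Under the flat-rate assumption the window covers roughly 413,000 downloads of all versions, so even a 1% share lands in the thousands. Pinned installs and cached wheels would pull that number down; unpinned CI jobs would push it up.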

The poisoned package didn't wait for you to import it. It didn't need you to call a function. The malware fired the second the package existed on your machine — embedded in a file that Python's runtime executes automatically on startup.
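
The article does not name the file involved. One standard mechanism that fits the description is a `.pth` file in `site-packages`: Python's `site` module executes any line in such a file that begins with `import` followed by a space or tab, every time the interpreter starts. A minimal sketch for listing those lines in the current environment, assuming that mechanism (the function names are illustrative):

```python
import site
import sysconfig
from pathlib import Path


def executable_pth_lines(pth_text: str) -> list[str]:
    """Return the lines of a .pth file that the site module would execute.

    Lines starting with "import" plus a space or tab are run as code;
    everything else is treated as a path entry or a comment.
    """
    return [
        line
        for line in pth_text.splitlines()
        if line.startswith(("import ", "import\t"))
    ]


def site_dirs() -> set[Path]:
    """Collect the site-packages directories of the running interpreter."""
    paths = sysconfig.get_paths()
    dirs = {Path(paths["purelib"]), Path(paths["platlib"])}
    if hasattr(site, "getusersitepackages"):
        dirs.add(Path(site.getusersitepackages()))
    return {d for d in dirs if d.is_dir()}


def scan() -> dict[Path, list[str]]:
    """Map each .pth file to the lines Python would execute at startup."""
    findings: dict[Path, list[str]] = {}
    for directory in site_dirs():
        for pth in sorted(directory.glob("*.pth")):
            text = pth.read_text(encoding="utf-8", errors="replace")
            lines = executable_pth_lines(text)
            if lines:
                findings[pth] = lines
    return findings


if __name__ == "__main__":
    for pth, lines in scan().items():
        print(pth)
        for line in lines:
            print(f"    {line[:120]}")
```

Some legitimate tools ship executable `.pth` lines too (editable installs, for example), so a hit is a prompt to read the line, not proof of compromise.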

What makes this different: Most supply chain attacks target packages people choose to install. This one targeted a package that sits underneath other tools as a transitive dependency. Developers who'd never heard of LiteLLM had it running on their machines because an IDE plugin or AI framework pulled it in silently.

The attacker published straight to PyPI with no corresponding source code on GitHub. No diff to review. No commit to audit. Just a built package on the registry that looked identical to the real thing, except for the payload hiding inside.

Why LiteLLM Was the Perfect Target

Think about what LiteLLM does. It's a universal proxy for AI model APIs. That means it handles credentials for OpenAI, Anthropic, Google Cloud AI, Amazon Bedrock, Azure OpenAI, Cohere, and dozens more — all routed through a single library. Compromise LiteLLM once, and you don't get one API key. You get all of them. Every provider. Every team. Every environment where the package is installed.

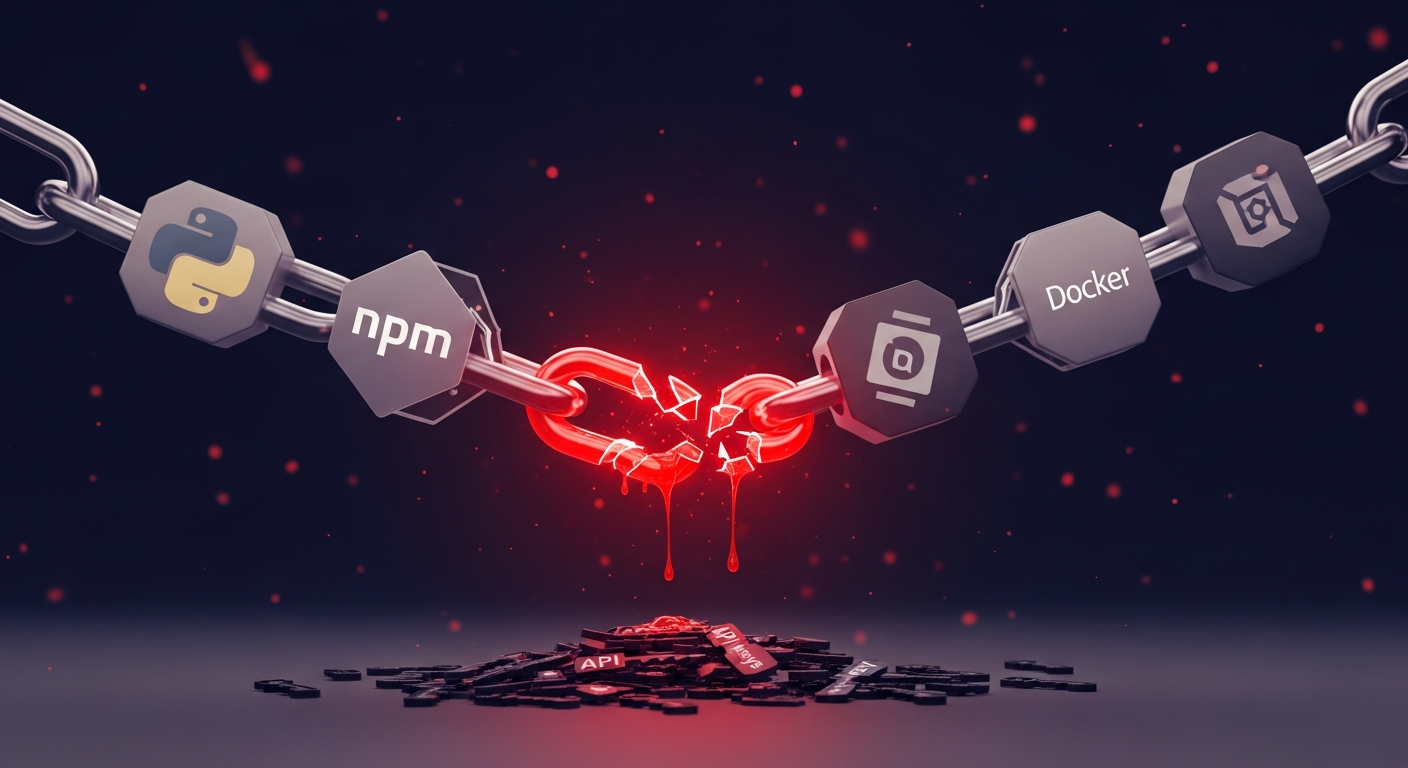

How Did TeamPCP Chain Five Ecosystems in Two Weeks?

The LiteLLM attack didn't start with LiteLLM. It started with Trivy — an open-source security scanning tool. Yes, a security product. On March 19, TeamPCP compromised Trivy's CI/CD pipeline (Snyk, 2026). The irony is staggering: the tool companies run to detect supply chain attacks became the entry point for one.

Figure: TeamPCP Attack Chain: 5 Ecosystems in 5 Days. Each compromise provided credentials for the next target. Source: Wiz Research, ReversingLabs, Snyk, March 2026

Here's how the chain worked. Trivy's compromised CI pipeline gave TeamPCP access to credentials stored in GitHub Actions secrets. Those credentials unlocked Docker Hub publishing rights. Docker Hub access led to npm tokens. And npm tokens — combined with the CI credentials harvested along the way — gave them the PyPI publishing token for LiteLLM.

Each breach funded the next. Each ecosystem's trust model assumed the upstream ecosystem was secure. None of them were.

Supply chain attacks doubled in frequency during 2025, averaging 26 incidents per month (CyberSentriq, 2025). But this wasn't a typical smash-and-grab on a single registry. TeamPCP demonstrated that modern package ecosystems are interconnected in ways defenders haven't mapped — and attackers clearly have.

What Did the Three-Stage Payload Actually Do?

The malware didn't just grab a few environment variables. It operated in three distinct stages, each escalating the damage.

Stage 1: Credential Harvesting

The moment the package installed, it swept the machine for every secret it could find. SSH private keys. AWS and GCP tokens. Kubernetes service account credentials. Cryptocurrency wallet files. Every .env file on disk. Browser credential stores. The payload didn't discriminate — it grabbed everything and exfiltrated it to attacker-controlled infrastructure.

Stage 2: Container Deployment

With harvested Kubernetes credentials, the malware deployed privileged containers across every node in the cluster. Not just the compromised machine — the entire cluster. These containers ran with elevated permissions, giving TeamPCP root-level access to production infrastructure.

Stage 3: Persistent Backdoor

The final stage installed a lightweight backdoor that sat dormant, waiting for command-and-control instructions. Even if a team detected and removed the malicious package, the backdoor persisted independently. It was designed to survive incident response.

The critical detail: TeamPCP apparently "vibe coded" this malware — the implementation was so resource-hungry and poorly optimized that it crashed machines. A developer noticed their workstation consuming absurd amounts of RAM, investigated, and discovered the compromise. If the payload had been even marginally competent, it would've run silently for weeks across thousands of production environments.

After the attack, TeamPCP posted on Telegram: "Many of your favourite security tools and open-source projects will be targeted in the months to come… stay tuned." They aren't done.

Why a Cursor MCP Plugin Was the Entry Point Nobody Saw

Here's the part that should concern every engineering leader: the developer who discovered this attack never installed LiteLLM. They didn't add it to their requirements file. They didn't run pip install litellm. It arrived as a transitive dependency — pulled in by a Cursor MCP plugin they didn't even know they had.

Sonatype's 2026 report found 877,522 malicious packages in open-source repositories last year alone, up from roughly 512,000 the year before (Sonatype, 2026). That's the haystack. Now consider that 95% of vulnerable component downloads already had a fix available — teams just didn't know they were pulling in the vulnerable version (Sonatype, 2026).

Figure: Malicious Packages in Open-Source Repos (Year-over-Year), scale 0 to 900K. Source: Sonatype State of Software Supply Chain, 2026

The dependency chain problem isn't new. But AI tooling has made it dramatically worse. A single AI coding assistant plugin can pull in dozens of packages you've never audited. LiteLLM alone sits underneath countless AI frameworks, agent libraries, and IDE extensions as an invisible transitive dependency. You don't choose it. It chooses you.

The AI Supply Chain Blind Spot Getting Wider Every Quarter

Seventy-eight percent of enterprises adopted AI tools in 2025 (McKinsey, 2025). Gartner projects 40% of enterprise applications will feature task-specific AI agents by 2026, up from less than 5% in 2025 (Gartner, 2025). Every one of those agents sits on top of a dependency stack that nobody's auditing with the same rigor applied to first-party code.

The numbers tell a clear story. Eighty-six percent of commercial codebases contain vulnerable open-source components, and 81% of those have high- or critical-risk vulnerabilities (Black Duck, 2025). Third-party involvement in breaches doubled in a single year — from 15% to 30% — according to the Verizon 2025 DBIR (Verizon, 2025).

Figure: Global Cost of Supply Chain Attacks ($B). 2023: $46B; 2024: $52B; 2025: $60B; 2026: $69B; 2028: $91B; 2030: $120B; 2031: $138B. Source: Cybersecurity Ventures, 2025

Software supply chain attacks are projected to cost $60 billion globally in 2025, growing to $138 billion by 2031 at a 15% compound annual rate (Cybersecurity Ventures, 2025). And the average supply chain breach takes 254 days to detect and contain (DeepStrike, 2025). TeamPCP's sloppy code cut that to three hours — by accident.

The companies deploying AI the fastest right now have the least visibility into what's underneath it. Every AI agent, copilot, and internal tool shipped this year runs on hundreds of packages exactly like LiteLLM. How many of them have you actually audited?

What CTOs and Security Teams Should Do This Week

The LiteLLM attack wasn't sophisticated in execution — it was sophisticated in target selection. Defending against the next one requires changes at multiple levels. Here's what matters most, starting today.

Audit Your AI Dependency Chain

Don't just scan your requirements.txt or package.json. Map the full transitive dependency tree for every AI tool, IDE plugin, and agent framework your team uses. Specifically look for packages that handle credentials, API keys, or authentication tokens. Those are the high-value targets attackers will hit next.
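
For a Python environment, one way to start that mapping is to invert the installed packages' declared requirements and walk upward from the package you care about. The sketch below uses only the standard library's `importlib.metadata`; the helper names are illustrative, and the name parsing is a simplified regex, not a full requirement parser.

```python
import re
from importlib import metadata


def _norm(name: str) -> str:
    """Normalize a distribution name (lowercase, runs of -_. become -)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def _req_name(requirement: str) -> str:
    """Extract the project name from a requirement string like 'foo[bar]>=1'."""
    match = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", requirement)
    return _norm(match.group(1)) if match else ""


def installed_requirements() -> dict[str, list[str]]:
    """Read {distribution: [requirement strings]} from the current environment."""
    result: dict[str, list[str]] = {}
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:
            result[name] = list(dist.requires or [])
    return result


def reverse_deps(requires_by_dist: dict[str, list[str]]) -> dict[str, set[str]]:
    """Invert the mapping into {dependency: {distributions that declare it}}."""
    needed_by: dict[str, set[str]] = {}
    for dist, reqs in requires_by_dist.items():
        for req in reqs:
            name = _req_name(req)
            if name:
                needed_by.setdefault(name, set()).add(_norm(dist))
    return needed_by


def why_installed(target: str, needed_by: dict[str, set[str]]) -> list[list[str]]:
    """Return chains [top-level package, ..., target] explaining an install."""
    chains: list[list[str]] = []

    def walk(node: str, path: list[str]) -> None:
        parents = needed_by.get(node, set()) - set(path)  # skip cycles
        if not parents:
            chains.append(list(reversed(path)))
            return
        for parent in sorted(parents):
            walk(parent, path + [parent])

    walk(_norm(target), [_norm(target)])
    return chains
```

Calling `why_installed("litellm", reverse_deps(installed_requirements()))` lists every chain that ends at LiteLLM; a chain containing only `litellm` means nothing else in the environment declares it. The sketch counts optional extras as dependencies, so it over-reports, and it sees only the Python environment it runs in, not IDE plugins that bundle their own interpreter.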

Verify Source-to-Registry Consistency

The poisoned LiteLLM versions existed on PyPI but not on GitHub. That's a massive red flag your tooling should catch. Implement checks that flag any package version where the published artifact doesn't match a tagged release in the source repository.
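
A rough version of that check can be built from two public endpoints: PyPI's JSON API (`https://pypi.org/pypi/<project>/json`) and GitHub's repository tags API. The sketch below assumes the project tags releases with the bare version or a leading `v`; that convention, the helper names, and the single-page tag fetch are all simplifications, and the repository owner and name are left as parameters for you to supply.

```python
import json
import urllib.request


def pypi_versions(project: str) -> list[str]:
    """List versions PyPI currently serves for a project (network call)."""
    url = f"https://pypi.org/pypi/{project}/json"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return list(json.load(resp)["releases"])


def github_tags(owner: str, repo: str) -> list[str]:
    """List tag names for a repository (first 100 only, unauthenticated)."""
    url = f"https://api.github.com/repos/{owner}/{repo}/tags?per_page=100"
    with urllib.request.urlopen(url, timeout=10) as resp:
        return [tag["name"] for tag in json.load(resp)]


def untagged_versions(registry_versions: list[str], repo_tags: list[str]) -> list[str]:
    """Return registry versions that have no matching source tag.

    Assumes tags look like '1.2.3' or 'v1.2.3'; other schemes need their
    own normalization.
    """
    tagged = {tag[1:] if tag.startswith("v") else tag for tag in repo_tags}
    return [version for version in registry_versions if version not in tagged]
```

Run against a project's recent releases, `untagged_versions(pypi_versions(name), github_tags(owner, repo))` returns the versions worth a second look. Projects that tag inconsistently will produce noise, so treat the output as a review queue, not a verdict.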

Pin Versions and Verify Checksums

Stop using version ranges for security-sensitive dependencies. Pin exact versions. Verify checksums. Use lock files. Yes, it creates maintenance overhead. That overhead is cheaper than a full credential rotation across every AI service your organization uses.
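
pip can enforce this directly: with `--require-hashes`, every requirement must carry a `--hash=sha256:...` entry and any mismatch aborts the install. For artifacts handled outside pip (a vendored wheel, a mirrored download), the same check is a few lines of standard-library code. A minimal sketch, with illustrative function names:

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large wheels don't need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_pin(path: Path, pinned_sha256: str) -> bool:
    """Compare a file's SHA-256 against the hex digest recorded in the lock file."""
    return hmac.compare_digest(sha256_of(path), pinned_sha256.strip().lower())
```

The pinned digest has to come from somewhere you trust and recorded before the incident, such as a reviewed lock file. Hashing the artifact you just downloaded and pinning that proves nothing.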

Monitor for Anomalous Runtime Behavior

The LiteLLM malware was caught because it crashed machines. You can't rely on attackers being sloppy. Deploy runtime monitoring that flags unexpected network connections, unusual file access patterns (especially reads of .env, .ssh, and credential stores), and abnormal resource consumption.
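
Production monitoring belongs at the OS or container layer, but the idea can be illustrated inside a single Python process with `sys.addaudithook`, which receives an `open` event (path, mode, flags) for file opens. The sketch below is an assumption-laden toy: the list of sensitive names is illustrative, it observes only the process that installs it, and audit hooks cannot be removed once added.

```python
import os
import sys
from pathlib import Path

# Illustrative lists; extend them for your own environment.
SENSITIVE_NAMES = {".netrc", "credentials", "id_rsa", "id_ed25519"}
SENSITIVE_DIRS = {".ssh", ".aws", ".kube"}


def is_sensitive(path: object) -> bool:
    """Return True if a path points at a likely credential file."""
    if isinstance(path, bytes):
        path = os.fsdecode(path)
    if not isinstance(path, str):
        return False  # e.g. an integer file descriptor
    parts = Path(path).parts
    if not parts:
        return False
    name = parts[-1]
    if name == ".env" or name.startswith(".env."):
        return True
    return name in SENSITIVE_NAMES or bool(SENSITIVE_DIRS.intersection(parts))


def make_hook(hits: list[str]):
    """Build an audit hook that records opens of sensitive paths into hits."""

    def hook(event: str, args: tuple) -> None:
        # Keep the hook cheap and exception-free: it runs on every audited event.
        if event == "open" and args and is_sensitive(args[0]):
            hits.append(os.fsdecode(args[0]))

    return hook
```

Install it early in the process with `hits = []` followed by `sys.addaudithook(make_hook(hits))`, then alert on anything that lands in `hits`. A payload running in a separate process is invisible to this hook, which is why the paragraph above calls for monitoring at the host level as well.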

Segment AI Tool Permissions

AI coding assistants and agent frameworks shouldn't have access to production credentials, Kubernetes cluster admin tokens, or SSH keys for infrastructure. Run AI tools in sandboxed environments with minimal permissions. If an agent needs API keys, provision scoped tokens with short TTLs — not master keys.
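
One small piece of that segmentation is refusing to hand a tool your whole shell environment. The sketch below launches a command with an allowlisted environment only; the baseline variable list and function names are assumptions for illustration, and this limits environment variables only, so filesystem access to `~/.ssh` or cloud config files still needs a sandbox or container.

```python
import os
import subprocess
from typing import Iterable

# Variables most tools need to start at all. Adjust for your platform.
BASELINE = ("PATH", "LANG", "TERM")


def minimal_env(env: dict[str, str], allow: Iterable[str]) -> dict[str, str]:
    """Keep only baseline variables plus the explicitly allowed ones."""
    keep = set(BASELINE) | set(allow)
    return {key: value for key, value in env.items() if key in keep}


def run_tool(cmd: list[str], allow: Iterable[str]) -> subprocess.CompletedProcess:
    """Run a command that sees nothing from the parent environment but the allowlist."""
    return subprocess.run(cmd, env=minimal_env(dict(os.environ), allow), check=False)
```

With `run_tool(["some-agent"], allow={"OPENAI_API_KEY"})`, where `some-agent` stands in for whatever tool you launch, the child process gets one scoped key and never sees `AWS_SECRET_ACCESS_KEY` or `KUBECONFIG`, even if they are set in your shell.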


Frequently Asked Questions

Was my organization affected by the LiteLLM attack?

The malicious versions (1.82.7 and 1.82.8) were live for approximately three hours on March 24, 2026 (BleepingComputer, 2026). Check your pip install logs and lock files for those specific versions. If they're present, assume full credential compromise and begin rotation immediately.
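
A quick first pass over lock files and the current environment might look like the sketch below. The two version numbers come from the article; the regular expressions are assumptions that cover `requirements.txt` style pins and the `name = "..."` / `version = "..."` pairs used by TOML lock files, so other formats need their own patterns.

```python
import re
from importlib import metadata
from typing import Optional

BAD_VERSIONS = {"1.82.7", "1.82.8"}

# requirements.txt style: litellm==1.82.7 or litellm[proxy]==1.82.7
REQ_PIN = re.compile(
    r"^\s*litellm(?:\[[^\]]*\])?\s*==\s*([0-9][^\s;#\\]*)",
    re.IGNORECASE | re.MULTILINE,
)
# TOML lock style: name = "litellm" followed by version = "1.82.7"
TOML_PIN = re.compile(r'name\s*=\s*"litellm"\s*\n\s*version\s*=\s*"([^"]+)"', re.IGNORECASE)


def pinned_bad_versions(lock_text: str) -> set[str]:
    """Return any known-bad LiteLLM versions pinned in a lock or requirements file."""
    found = set(REQ_PIN.findall(lock_text)) | set(TOML_PIN.findall(lock_text))
    return found & BAD_VERSIONS


def installed_bad_version() -> Optional[str]:
    """Return the installed LiteLLM version if it is one of the bad ones."""
    try:
        version = metadata.version("litellm")
    except metadata.PackageNotFoundError:
        return None
    return version if version in BAD_VERSIONS else None
```

A clean result here is not an all-clear: an environment that installed a poisoned version during the window and later upgraded will pass both checks, which is why install logs and CI build records matter.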

How did TeamPCP get access to publish LiteLLM packages?

They compromised Trivy's CI/CD pipeline first on March 19, then chained stolen credentials through GitHub Actions, Docker Hub, and npm to reach the PyPI publishing token for LiteLLM (Wiz, 2026). It wasn't a single breach — it was a cascade across five package ecosystems.

Can SCA tools like Snyk or Dependabot prevent this type of attack?

Software Composition Analysis tools catch known vulnerabilities, but this was a zero-day supply chain injection — the package itself was malicious. SCA tools won't flag a legitimate package name publishing a new version. You need source-to-registry verification and runtime behavior monitoring in addition to traditional SCA scanning.

What makes AI packages particularly high-value targets for attackers?

AI proxy libraries like LiteLLM hold credentials for multiple cloud AI providers simultaneously. One compromise yields API keys for OpenAI, Anthropic, Google, Amazon, and more. With 78% of enterprises now using AI tools (McKinsey, 2025), the blast radius of a single package compromise has grown with that adoption.

Is TeamPCP still active?

Yes. After the attack, TeamPCP posted on Telegram stating that "many of your favourite security tools and open-source projects will be targeted in the months to come." They've demonstrated capability across five package ecosystems and should be treated as an active, persistent threat.


The Trust Model Is Broken — What Comes Next

The LiteLLM attack exposed something the security community has warned about for years: open-source trust chains weren't built for a world where AI tools pull in dozens of invisible dependencies, each one a potential attack surface.

Here's what matters:

- One compromised CI/CD pipeline cascaded across five package ecosystems and became a credential harvesting operation

- The malware was caught by accident: sloppy code crashed a developer's machine, and no security tool flagged it

- Transitive dependencies are the real threat surface — the developer who found it never chose to install LiteLLM

- AI tooling amplifies the blast radius — a single AI proxy package holds keys to every AI provider an organization uses

- TeamPCP isn't done — they've publicly committed to targeting more security tools and open-source projects

The companies shipping AI agents fastest need to slow down long enough to understand what's underneath them. Audit your dependency chains. Verify your package sources. Segment your credentials. And assume that the next attack won't be kind enough to crash your machine as a warning.
